Making AI Think: The Future of Intelligent Systems

Intelligence is not defined by the ability to produce answers, but by the ability to judge when answers are uncertain. Modern AI systems lack this capacity.

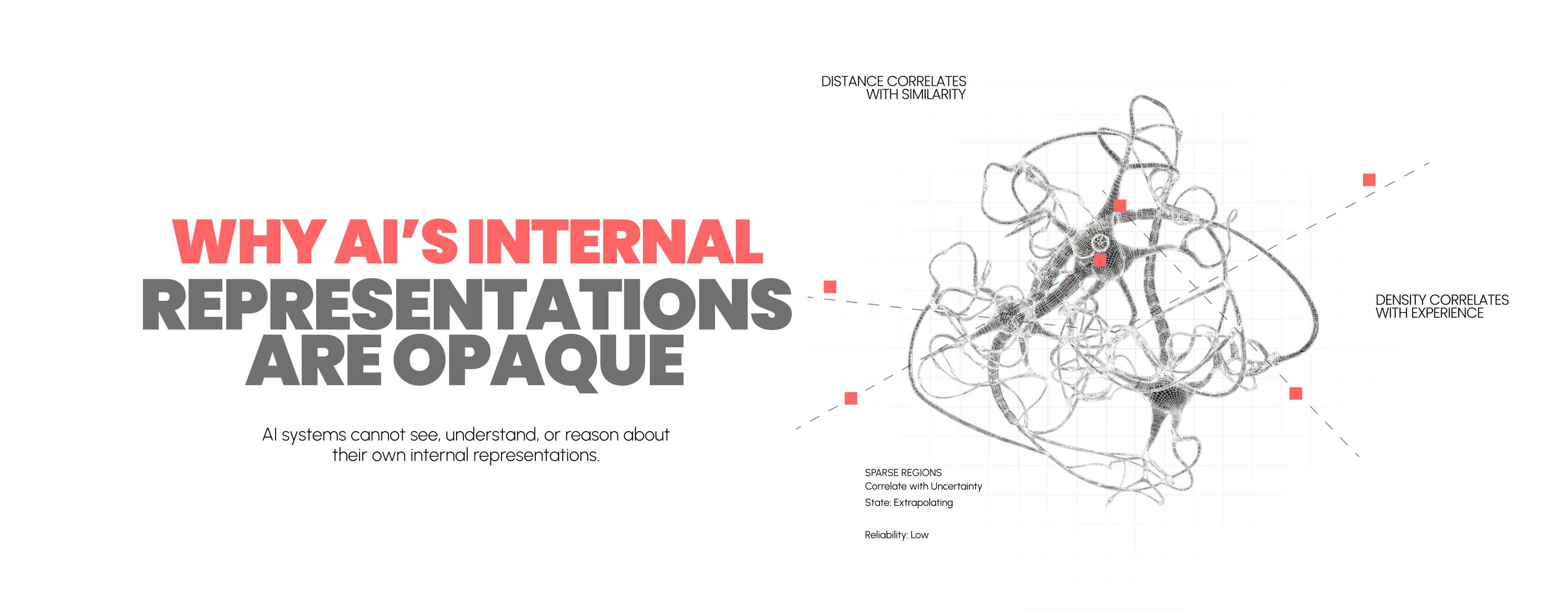

Why AI’s Internal Representations Are Opaque

Modern artificial intelligence systems have achieved remarkable performance across vision, language, and decision-making tasks.

Mapping the Mind of AI: Understanding Decisions Before They Fail

One of the most persistent challenges in modern artificial intelligence is not making predictions, but understanding why those predictions emerge.

Self-Correcting AI: Anticipating Failure Before It Happens

The reliability of an AI system is not determined by the confidence of its outputs, but by the structure of its internal representations.